No rigorous mathematical treatment will be given to this interesting topic. Instead, a number of selected examples taken from scientific journals and monographs are the subject of study in this chapter. The similarity with simple linear regression is obvious: setting all the weighting factors $w_i = 1$ recovers the ordinary, unweighted case. Compact formulae for the weighted least-squares calculation of the parameters $a_0$ (intercept) and $a_1$ (slope) and their standard errors are shown in Table 1.

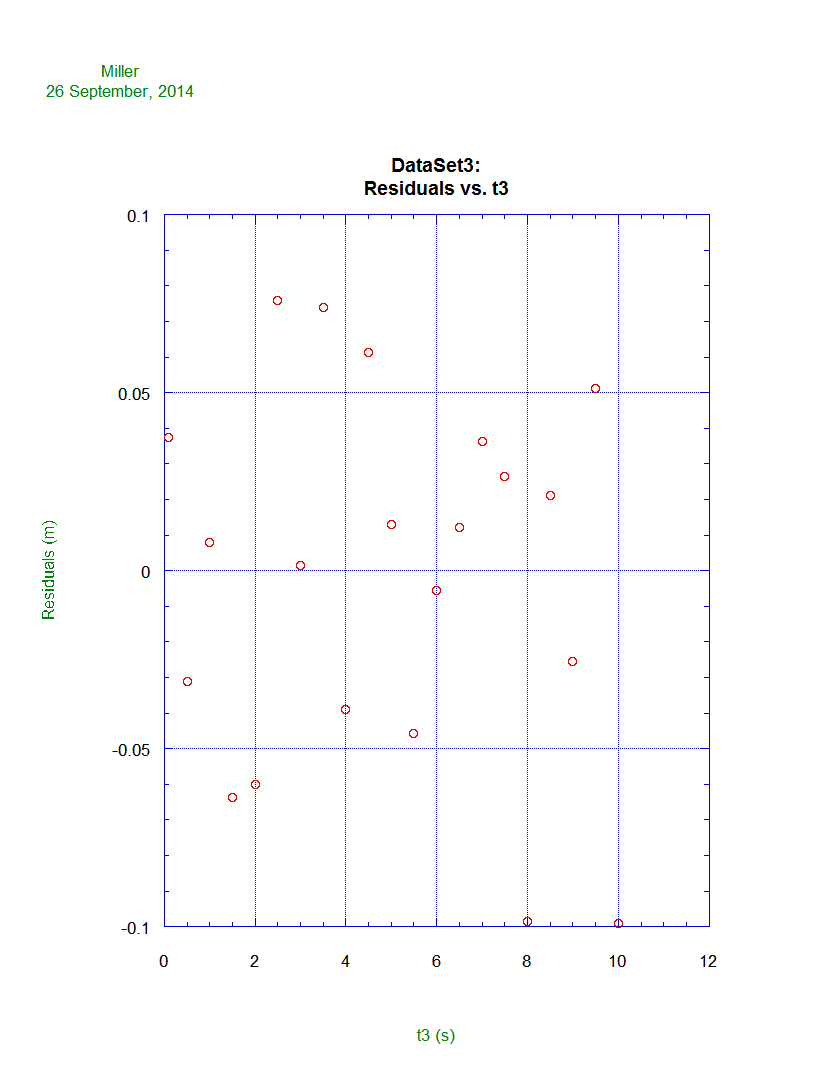
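The weighted fit summarised in Table 1 can be sketched in a few lines of Python. This is a minimal sketch, assuming the usual textbook form of the weighted normal equations with relative weights (weights known only up to a common scale factor, residual variance estimated with $n - 2$ degrees of freedom); Table 1 may arrange the same quantities differently. The function name `weighted_line_fit` and the sample data are hypothetical, chosen only for illustration.

```python
import math
from typing import Sequence, Tuple


def weighted_line_fit(
    x: Sequence[float], y: Sequence[float], w: Sequence[float]
) -> Tuple[float, float, float, float]:
    """Weighted least-squares fit of y = a0 + a1*x (hypothetical helper).

    Returns (a0, a1, se_a0, se_a1). Weights are treated as relative
    (known only up to a common scale factor), so the residual variance
    is estimated from the data with n - 2 degrees of freedom.
    """
    n = len(x)
    if not (n == len(y) == len(w)) or n < 3:
        raise ValueError("need at least 3 points and equal-length x, y, w")
    # Weighted sums appearing in the normal equations
    sw = sum(w)
    swx = sum(wi * xi for wi, xi in zip(w, x))
    swy = sum(wi * yi for wi, yi in zip(w, y))
    swxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    swxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    d = sw * swxx - swx ** 2  # determinant of the normal equations
    if d == 0:
        raise ValueError("x values must not all be equal")
    a1 = (sw * swxy - swx * swy) / d    # slope
    a0 = (swxx * swy - swx * swxy) / d  # intercept
    # Weighted residual variance with n - 2 degrees of freedom
    s2 = sum(wi * (yi - a0 - a1 * xi) ** 2 for wi, xi, yi in zip(w, x, y)) / (n - 2)
    se_a1 = math.sqrt(s2 * sw / d)
    se_a0 = math.sqrt(s2 * swxx / d)
    return a0, a1, se_a0, se_a1


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0, 5.0]
    ys = [2.1, 3.9, 6.2, 7.8, 10.1]  # hypothetical data
    # Unit weights: reduces to ordinary (unweighted) least squares
    print(weighted_line_fit(xs, ys, [1.0] * 5))
```

Passing unit weights reproduces the ordinary least-squares line, which is the reduction to simple linear regression mentioned above. Under this relative-weight convention, multiplying every weight by the same constant leaves $a_0$, $a_1$ and their standard errors unchanged.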

The purpose of this chapter is to provide an overview of how to check the underlying assumptions of a regression analysis, namely that the errors are independent of one another, have zero mean and a constant variance ($\sigma_i^2 = \sigma^2$ for all $i$), and follow a normal distribution, by means of basic residual plots such as residuals versus the independent variable $x$. The focus is mainly on fitting a straight-line model to experimental data. Several examples selected from scientific journals and monographs deal with linearity, calibration, heteroscedastic data, errors in the model, data transformation, time-order analysis and non-linear calibration curves.

Graphical techniques are especially useful when dealing with small samples. Although numerical statistical tests exist for verifying observed discrepancies, statisticians often prefer a visual examination of residual plots as a more informative and certainly more convenient approach. The residuals should show a pattern consistent with the assumptions made in performing the regression analysis or, failing that, at least no trend that contradicts them. Residuals play an essential role in regression diagnostics: no analysis is complete without a thorough examination of the residuals.
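The idea of reading the assumptions off a residual plot can be illustrated with a deliberately curved data set. The sketch below uses only the Python standard library; all function names and the data are hypothetical. It fits a straight line by ordinary least squares, prints a crude text version of a residuals-versus-$x$ plot, and counts runs of same-sign residuals. A systematic U-shaped pattern with few sign runs is the classic signature of a lack of linearity. The run count is also the statistic behind the Wald-Wolfowitz runs test, although no formal test is carried out here.

```python
from typing import List, Sequence


def line_residuals(x: Sequence[float], y: Sequence[float]) -> List[float]:
    """Fit y = a0 + a1*x by ordinary least squares and return the residuals."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    a1 = sxy / sxx       # slope
    a0 = my - a1 * mx    # intercept
    return [yi - (a0 + a1 * xi) for xi, yi in zip(x, y)]


def sign_runs(residuals: Sequence[float]) -> int:
    """Count runs of consecutive residuals with the same sign (exact zeros skipped)."""
    signs = [r > 0 for r in residuals if r != 0]
    if not signs:
        return 0
    return 1 + sum(1 for a, b in zip(signs, signs[1:]) if a != b)


def text_residual_plot(x: Sequence[float], residuals: Sequence[float], width: int = 20) -> str:
    """Crude residual-versus-x plot: one row per point, sorted by x.

    Negative residuals extend to the left of the '|' axis, positive ones to the right.
    """
    scale = max(abs(r) for r in residuals) or 1.0
    rows = []
    for xi, r in sorted(zip(x, residuals)):
        k = round(abs(r) / scale * width)
        left = " " * (width - k) + "-" * k if r < 0 else " " * width
        right = "+" * k if r > 0 else ""
        rows.append(f"{xi:8.3g} {left}|{right}")
    return "\n".join(rows)


if __name__ == "__main__":
    # Hypothetical curved data: y = x**2 fitted with a straight line
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [xi ** 2 for xi in xs]
    res = line_residuals(xs, ys)
    print(text_residual_plot(xs, res))
    print("sign runs:", sign_runs(res))
```

For these data the residuals are positive at both ends and negative in the middle, so a straight line is clearly the wrong model even though the residuals still sum to zero. When the points are in time order rather than sorted by $x$, the same run count can hint at correlated errors.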
